Why No-Code AI Agents Break When You Try to Scale Them

Read this and you’ll understand where no-code AI agents succeed, where they silently fail as you grow, and the practical guardrails to use so you don’t inherit a fragile system that collapses under real-world load.

The no-code promise - and the usual gap with reality

No-code AI platforms sell a simple idea: let non-engineers build AI agents quickly without writing infrastructure or model code. That accelerates proofs of concept and early pilots in many teams. But as usage, data volume, compliance needs, or complexity rise, the very abstraction that made the tool attractive becomes a liability: you lose fine control over model selection, monitoring depth, resource usage and custom integrations.

Below I walk through the specific failure modes you’ll hit as you scale and give practical ways to spot or prevent them.

Core scalability problems no-code platforms struggle with

No-code platforms hide complexity, but they can’t eliminate it. Common issues that appear as you scale include:

| Failure Mode | Scalability Impact |
|---|---|
| Data & Pipeline Bottlenecks | Reduced throughput and validation errors as data variety grows |
| Resource Inefficiency | Unpredictable costs and performance due to lack of compute control |
| Integration Friction | Brittle connections to internal systems and proprietary formats |
| Vendor Lock-in | Feature constraints and dependency on the provider's roadmap |

Data and pipeline bottlenecks: ingestion throughput and data validation controls often aren’t built for large, varied datasets.

Resource inefficiency: limited control over compute allocation and memory management leads to high costs and unpredictable performance, a challenge addressed in the AWS Well-Architected Lens on resource efficiency.

Integration friction: connecting to multiple internal systems, streaming sources, and proprietary file formats becomes brittle over time.

Vendor lock-in and upgrade risk: dependence on a provider’s roadmap constrains feature timelines and fixes.

Each of these problems shows up in metrics you already measure: rising end-to-end latency, growing error rates, ballooning cloud bills, and longer mean time to remediate incidents.

State management and context persistence: the hidden failure mode

Keeping consistent state across multi-turn conversations and distributed services is notoriously hard. No-code agents often rely on ephemeral context windows rather than durable storage, causing inconsistent answers, repeated questions, and hallucinations when interactions span many steps. Agent libraries and frameworks explicitly call out the need for “memory” and state management; without it, agents lose track of prior facts and user intent, as detailed in LangChain’s memory modules overview.

Example consequence: a customer who completed KYC in an earlier session is asked again and then receives contradictory responses because the platform didn’t persist the earlier state.
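The KYC scenario above can be avoided by reading state from durable storage rather than from the context window. The following is a minimal sketch, assuming a SQLite table keyed by session ID; the `SessionStore` class, the `kyc_completed` key, and the `next_step` function are hypothetical names used only for illustration, not part of any platform's API.

```python
import json
import sqlite3


class SessionStore:
    """Durable per-session key/value state backed by SQLite (illustrative)."""

    def __init__(self, path=":memory:"):
        # ":memory:" keeps this example self-contained; pass a file path
        # (or use a real database) so state survives process restarts.
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS session_state ("
            "session_id TEXT, key TEXT, value TEXT, "
            "PRIMARY KEY (session_id, key))"
        )

    def set(self, session_id, key, value):
        # INSERT OR REPLACE acts as an upsert on (session_id, key).
        self.conn.execute(
            "INSERT OR REPLACE INTO session_state VALUES (?, ?, ?)",
            (session_id, key, json.dumps(value)),
        )
        self.conn.commit()

    def get(self, session_id, key, default=None):
        row = self.conn.execute(
            "SELECT value FROM session_state WHERE session_id = ? AND key = ?",
            (session_id, key),
        ).fetchone()
        return json.loads(row[0]) if row else default


def next_step(store, session_id):
    """Choose the next step from persisted facts, not from chat history."""
    if store.get(session_id, "kyc_completed", False):
        return "proceed"
    return "request_kyc"


store = SessionStore()
store.set("customer-42", "kyc_completed", True)
```

With this in place, `next_step(store, "customer-42")` returns `"proceed"` in any later session, while an unknown session ID still gets `"request_kyc"`.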

The observability paradox - you can’t fix what you can’t see

No-code solutions promise simplicity, but they frequently hide prompt decisions, retry logic, and intermediate outputs behind dashboards that don’t expose root causes. This leads to an “observability paradox”: systems that are easy to run in proof-of-concept become opaque in production. Without full traces of each prompt, model output, and downstream decision logic, you can’t diagnose why an agent fails or drifts. Diagnosing production failures requires rigorous logging, tracing, and explainability features that many no-code platforms don’t provide out of the box.

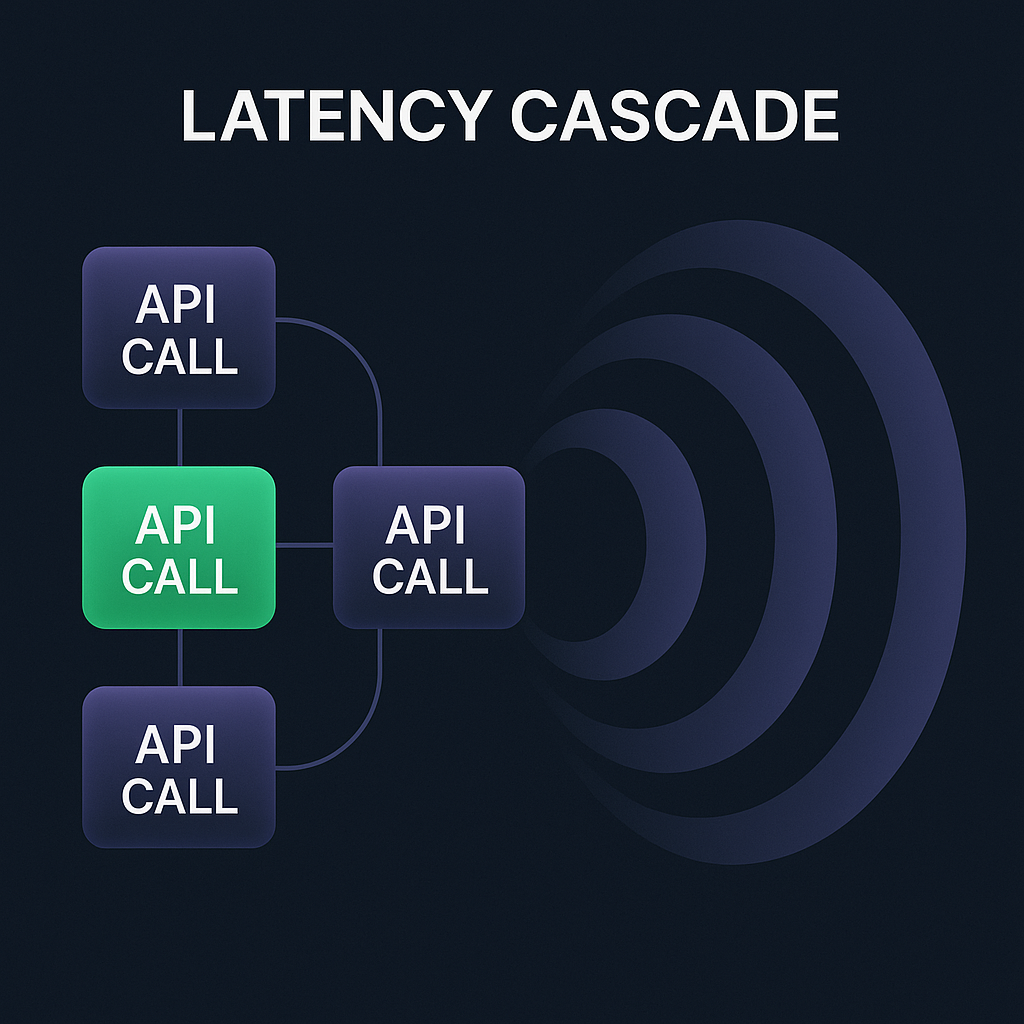
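As a rough illustration of what a full trace means in practice, the sketch below wraps each agent call so that the prompt, output, latency, and any error are recorded under a shared trace ID. It assumes an in-memory list as the log sink and two stand-in agent functions (`fake_classifier`, `fake_responder`); all of these names are hypothetical, and a real deployment would write to a central logging or tracing system instead.

```python
import json
import time
import uuid

TRACE_LOG = []  # stand-in for a central log sink (assumption)


def traced_call(trace_id, step, fn, prompt):
    """Call fn(prompt) and record prompt, output, status, and latency."""
    record = {"trace_id": trace_id, "step": step, "prompt": prompt}
    start = time.perf_counter()
    try:
        record["output"] = fn(prompt)
        record["status"] = "ok"
        return record["output"]
    except Exception as exc:
        # Keep the failure in the trace, then let the caller handle it.
        record["status"] = "error"
        record["error"] = repr(exc)
        raise
    finally:
        record["latency_ms"] = round((time.perf_counter() - start) * 1000, 3)
        TRACE_LOG.append(json.dumps(record))


def fake_classifier(prompt):
    # Hypothetical agent: a real one would call a model here.
    return "billing"


def fake_responder(prompt):
    # Hypothetical agent consuming the previous agent's output.
    return f"Routing to {prompt} team"


trace_id = str(uuid.uuid4())
intent = traced_call(trace_id, "classify", fake_classifier, "I was charged twice")
reply = traced_call(trace_id, "respond", fake_responder, intent)
```

Because every record carries the same `trace_id`, a failed or surprising reply can be followed back through each intermediate prompt and output.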

Latency cascades in agent chains

When you chain multiple agents or API calls, each additional call adds to end-to-end latency. At small scale this is tolerable; at scale, it becomes catastrophic. Research from Google demonstrates how call chains amplify tail latency - small delays early in a sequence cause large, user-facing slowdowns - detailed in their study on service chaining and latency amplification. No-code platforms that orchestrate sequential agent calls without parallelism or careful SLO planning create “latency cascades” that worsen as concurrent users grow.

Symptom: average response times remain acceptable, but tail latency (95th to 99th percentile) spikes, resulting in timeouts or retried calls.
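The arithmetic behind this symptom can be sketched with a small simulation. It assumes, purely for illustration, that each call independently takes 50 ms in 99% of cases and 1000 ms in 1% of cases; these figures and the function names are made up for the example. Under that assumption, a request that makes 10 sequential calls hits at least one slow call about 9.6% of the time (1 - 0.99^10), so slowness that was invisible at the 95th percentile of a single call now dominates it.

```python
import math
import random


def p_slow_request(p_slow_call, n_calls):
    """Chance that at least one of n independent sequential calls is slow."""
    return 1 - (1 - p_slow_call) ** n_calls


def percentile(samples, pct):
    """Nearest-rank percentile of a list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def simulate_chain(n_calls, n_requests=20000, seed=0):
    """Total latency (ms) per request for a chain of sequential calls."""
    rng = random.Random(seed)
    totals = []
    for _ in range(n_requests):
        total = 0
        for _ in range(n_calls):
            # Assumed latency model: 1% of calls are slow (1000 ms).
            total += 1000 if rng.random() < 0.01 else 50
        totals.append(total)
    return totals


single = simulate_chain(1)
chain = simulate_chain(10)

for name, samples in (("1 call", single), ("10 calls", chain)):
    print(name, "p50:", percentile(samples, 50), "p95:", percentile(samples, 95))
```

The median of the 10-call chain stays at the sum of the fast calls, while its 95th percentile already includes a slow call. This is why averages look healthy while users see timeouts.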

Model drift - accuracy that degrades silently

As data distributions shift over time, a model’s outputs fit the new data less well, even though the model itself has not changed. No-code vendors rarely include built-in drift detection, automated retraining pipelines, or alerting for performance degradation. Without that infrastructure, accuracy erodes quietly and business users lose trust.

Practical test: track model output quality over time with labeled validation samples and set alerts for sudden drops in key metrics.
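One way to sketch this practical test is a rolling-window accuracy check against a baseline. The example below assumes you already have labeled samples arriving over time; the `DriftMonitor` class, the window size, and the 10-point drop threshold are illustrative choices rather than recommended values.

```python
from collections import deque


class DriftMonitor:
    """Alert when rolling accuracy falls too far below a baseline."""

    def __init__(self, baseline_accuracy, window=50, max_drop=0.10):
        self.baseline = baseline_accuracy
        self.max_drop = max_drop
        # deque(maxlen=...) discards the oldest result automatically.
        self.results = deque(maxlen=window)

    def record(self, predicted, expected):
        """Store whether one labeled sample was answered correctly."""
        self.results.append(predicted == expected)

    def accuracy(self):
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def drifted(self):
        # Wait for a full window so a few early misses don't trigger alerts.
        if len(self.results) < self.results.maxlen:
            return False
        return self.accuracy() < self.baseline - self.max_drop


monitor = DriftMonitor(baseline_accuracy=0.9, window=10, max_drop=0.1)
for _ in range(10):
    monitor.record("approve", "approve")  # model agrees with the label
healthy = monitor.drifted()

for _ in range(5):
    monitor.record("approve", "reject")  # model now disagrees
degraded = monitor.drifted()
```

In this run `healthy` is `False` and `degraded` is `True`, since the rolling accuracy falls to 0.5 against a threshold of 0.8. In production the `drifted()` result would feed an alerting channel.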

Hallucination amplification across multi-agent systems

Large language models can fabricate facts or “hallucinate.” When you build multi-agent workflows where one agent’s output feeds the next, a single hallucination can propagate and compound across the chain. Surveys and analyses demonstrate hallucination as a measurable phenomenon in modern generative models, such as this arXiv paper on hallucination in LLMs. No-code platforms rarely provide built-in guardrails to validate or constrain outputs at each step, so hallucinations become amplified and harder to trace back to the origin.
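A per-step guardrail can be as simple as refusing to pass an output downstream unless it matches an expected shape. The sketch below assumes each agent returns JSON with an `intent` drawn from a known set and a `confidence` between 0 and 1; that schema, the `ALLOWED_INTENTS` set, and the function names are hypothetical. A schema check like this catches only malformed or out-of-vocabulary outputs, not every fabricated fact, so it complements knowledge lookups rather than replacing them.

```python
import json

ALLOWED_INTENTS = {"billing", "technical", "account"}  # hypothetical schema


class ValidationError(Exception):
    """Raised when an agent output fails a structural check."""


def validate_step_output(raw):
    """Parse and check one agent's output before it is passed on."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValidationError("expected a JSON object")
    if data.get("intent") not in ALLOWED_INTENTS:
        raise ValidationError(f"unknown intent: {data.get('intent')!r}")
    confidence = data.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0 <= confidence <= 1:
        raise ValidationError("confidence must be a number in [0, 1]")
    return data


def run_chain(raw_output, next_agent):
    """Call the next agent only when the previous output passes validation."""
    try:
        checked = validate_step_output(raw_output)
    except ValidationError as err:
        # Stop here and record the reason instead of propagating bad data.
        return {"status": "rejected", "reason": str(err)}
    return {"status": "ok", "result": next_agent(checked)}


good = run_chain('{"intent": "billing", "confidence": 0.9}',
                 lambda d: d["intent"].upper())
bad = run_chain('{"intent": "refund_everything", "confidence": 0.9}',
                lambda d: d["intent"].upper())
```

Rejecting at the step where the problem appears also records where in the chain the bad output originated.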

The citizen-developer ceiling: why no-code stops being no-code

In early stages, product teams can build useful automations with no-code tooling. But as you scale, you hit a ceiling: to tune prompts, manage token usage, add retries, handle edge cases, and integrate custom logic, you’ll need specialists in prompt engineering, MLOps, and infrastructure. Gartner highlights this shift from “citizen developer” to specialized teams as inevitable in their analysis of the future of low-code development.

Token economics and hidden cost explosions

Many no-code platforms, and their underlying LLM providers, charge per token or per API call. When agents unexpectedly generate longer prompts, retry on failures, or call other agents, costs can spike rapidly. OpenAI’s pricing for language models is token-based, and unmonitored usage at scale can produce large, unexpected bills, as shown on OpenAI’s pricing page. Without caps or real-time monitoring, orchestration layers can invite serious “bill shock.”
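A hard spending cap is one way to turn bill shock into a visible, handled error. The sketch below assumes per-1,000-token prices for input and output; the prices shown are placeholders rather than any provider's actual rates, and the `TokenBudget` class is a hypothetical helper that would sit in front of whatever client makes the model calls.

```python
class BudgetExceeded(Exception):
    """Raised when a call would push spending past the configured cap."""


class TokenBudget:
    """Track estimated spend and refuse calls that exceed a cap."""

    def __init__(self, max_cost_usd, input_price_per_1k, output_price_per_1k):
        self.max_cost_usd = max_cost_usd
        self.input_price_per_1k = input_price_per_1k
        self.output_price_per_1k = output_price_per_1k
        self.spent = 0.0

    def cost(self, input_tokens, output_tokens):
        """Estimated cost of one call in USD."""
        return (input_tokens / 1000 * self.input_price_per_1k
                + output_tokens / 1000 * self.output_price_per_1k)

    def charge(self, input_tokens, output_tokens):
        """Record a call's cost, or raise if it would exceed the cap."""
        call_cost = self.cost(input_tokens, output_tokens)
        if self.spent + call_cost > self.max_cost_usd:
            raise BudgetExceeded(
                f"call costing {call_cost:.4f} USD would exceed the "
                f"{self.max_cost_usd:.2f} USD cap"
            )
        self.spent += call_cost
        return call_cost


# Placeholder prices (USD per 1,000 tokens), not real provider rates.
budget = TokenBudget(max_cost_usd=0.10,
                     input_price_per_1k=0.01,
                     output_price_per_1k=0.03)
budget.charge(1000, 1000)
budget.charge(1000, 1000)
try:
    budget.charge(1000, 1000)  # a retry or extra agent hop
    blocked = False
except BudgetExceeded:
    blocked = True
```

Retries and agent-to-agent calls each pass through `charge`, so a runaway loop stops at the cap instead of showing up on the invoice.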

Security, compliance and regulatory liability gaps

Regulated industries require audit trails, explainability, and robust data governance. No-code platforms may lack the detailed access logs, data lineage, or documentation demanded by regulations such as the EU AI Act. The European Commission’s regulatory framework for artificial intelligence underscores risk-based governance and transparency requirements many no-code offerings don’t fully support.

The "black box within a black box" problem

You’re often layering an opaque no-code orchestration engine on top of opaque LLMs. This stacks two layers of unexplainability: the orchestrator hides decision paths and the underlying models hide how outputs were formed. Business stakeholders and auditors increasingly demand explainability and traceability; without those, root-cause analysis becomes nearly impossible, as argued in Harvard Business Review’s article on algorithm auditing.

Cold starts, warm-up penalties and burst scaling

Serverless and managed AI platforms can’t always provision capacity instantly at scale. Cold starts—where new instances need time to initialize—cause performance penalties during traffic spikes. When many requests arrive concurrently, platforms may underprovision and suffer degraded performance until resources warm up.

Practical checklist: how to evaluate a no-code AI platform for scaling

Does it provide durable state stores and memory primitives for agents?

Can you export full request/response logs and traces for every agent call?

Are there monitoring tools for model drift and automated alerts?

Does it include cost caps or fine-grained token usage controls tied to billing alerts?

Can you plug in your own models or retraining pipelines when needed?

Does the vendor provide compliance artifacts, data lineage, and audit logs for regulated deployments?

When no-code is the right call - and when to move to code

No-code makes sense for rapid prototyping, validating product assumptions, and empowering domain experts to iterate. But move to code (or hybrid architectures) when you need:

Predictable latency and SLOs under load.

Full observability and traceability for audits.

Tight cost control over tokenized pricing.

Retraining pipelines and drift detection infrastructure.

Custom integrations and fine-grained security controls in line with OWASP’s API Security Project.

Choosing Your Path

| Business Need | No-Code | Custom Code |
|---|---|---|
| Speed to Market | High / Rapid Prototyping | Moderate |
| Observability & Tracing | Limited / Opaque | Full / Granular |
| Cost Management | Variable / Per-token | Optimized / Predictable |
| Integration Complexity | Basic / Standard APIs | High / Custom Logic |

A practical hybrid approach is to architect a “no-code front door” for fast iteration, backed by a developer-owned core for production-grade pipelines and monitoring, as many enterprise teams recommend.

Quick wins you can implement this week

Add explicit state persistence for multi-turn interactions (session IDs + database) using patterns from LangChain’s memory guide.

Export raw prompts and responses to a central log and enable tracing across agent calls.

Configure billing alerts and set conservative token caps on test environments using Azure Cost Management.

Create simple validation checks on agent outputs before they’re acted on downstream (schema checks, knowledge lookups).

Start collecting labeled quality samples to detect model drift early.

Final word for people scaling AI agents

You’ll get real value from no-code AI—but expect limits. Treat no-code as a fast discovery tool, not the final production architecture for mission-critical flows. Plan early for observability, durable state, cost controls, and compliance so your proof of concept can evolve into a reliable service without painful rework.

